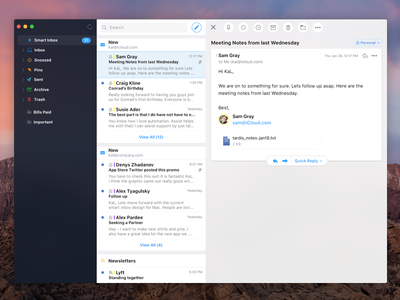

If you haven’t already, download and install the Spark mail app on your Mac to get started. If you’re already using Spark and want to add a Zoho Mail account, follow the steps under “How to Add Zoho Mail Email to Spark for Mac” instead.

1. Type in your Zoho Mail email address on the welcome screen.
2. On the next screen, enter your name and your Zoho Mail account password, then click on Additional Settings.
3. Fill out the Incoming Mail Server (IMAP) and Outgoing Mail Server (SMTP) details for your account, as provided by Zoho Mail support.
4. Click 'Log in' to start using your Zoho Mail email account with Spark.

That’s it! Your Zoho Mail account is now ready to be used with Spark for Mac. You can follow the steps detailed below to add additional Zoho Mail email accounts to Spark.

How to Add Zoho Mail Email to Spark for Mac

If you are already using the Spark Mail app on your Mac and want to add your Zoho Mail account, simply follow these steps.

1. At the top left of your screen, click on “Spark Desktop” > “Add an account…”, or go to Spark's Preferences > Accounts and click on the Add Account button there.
2. In the “Title” field, enter ‘Zoho Mail Account’ or anything else you prefer (optional).

That’s it! Spark will automatically set up your Zoho Mail account on Mac and all your emails will be available to use on your Mac. Download Spark for Free to start using your Zoho Mail email account on Mac.

We fixed the issue with freezes after resuming the debugging process, so it now works smoothly. An issue that was breaking the helpers path and…

Note that while log_model saves environment-specifying files such as conda.yaml and requirements.txt, load_model does not automatically recreate that environment. To do so, you need to use your preferred method (conda, virtualenv, pip, etc.), using the artifacts saved by log_model. If you serve your model with mlflow models serve, MLflow will automatically recreate the environment. Those commands also accept an --env-manager option for even more control. (This is described in detail in Environment Management Tools.) In the case of mlflow.pyfunc.spark_udf(), you can use the --env-manager flag to recreate the environment during Spark batch inference. To learn more about loading models for specific runs, see Save and serve models.

PyCharm supports debugging for Jupyter notebooks. Using Alt + Shift + Enter (or Option + Shift + Enter for Mac), you can do cell-by-cell debugging. Version 2020.3.2 also includes other significant fixes.

    import mlflow
    from sklearn.model_selection import train_test_split
    from sklearn.datasets import load_diabetes
    from sklearn.ensemble import RandomForestRegressor

    mlflow.autolog()

    db = load_diabetes()
    X_train, X_test, y_train, y_test = train_test_split(db.data, db.target)

    # Create and train the model.
    rf = RandomForestRegressor(n_estimators=100, max_depth=6, max_features=3)
    rf.fit(X_train, y_train)

    # Use the model to make predictions on the test dataset.
    predictions = rf.predict(X_test)

In addition, or if you are using a library for which autolog is not yet supported, you may use key-value pairs to track:

- Constant values (for instance, configuration parameters)
- Values updated during the run (for instance, accuracy)
- Files produced by the run (for instance, model weights): mlflow.log_artifacts, mlflow.log_image, mlflow.log_text

This example demonstrates the use of these functions:

    import os
    from random import random, randint
    from mlflow import log_metric, log_param, log_params, log_artifacts

    if __name__ == "__main__":
        # Log a parameter (key-value pair)
        log_param("config_value", randint(0, 100))

        # Log a dictionary of parameters
        log_params(…

In addition, if you wish to load the model soon, it may be convenient to output the run’s ID directly to the console. For that, you’ll need the object of type mlflow.ActiveRun for the current run. You get that object by wrapping all of your logging code in a with mlflow.start_run() as run: block (see the mlflow.start_run() API reference).

    import mlflow
    from sklearn.model_selection import train_test_split
    from sklearn.datasets import load_diabetes

    db = load_diabetes()
    X_train, X_test, y_train, y_test = train_test_split(db.data, db.target)

    model = mlflow.sklearn.load_model("runs:/d7ade5106ee341e0b4c63a53a9776231")
    predictions = model.predict(X_test)
